As one of the most prevalent and transformative technologies of all time, artificial intelligence (AI) has been incorporated into many major industries since the term was first coined in the 1950s.

There are many new technological innovations that are changing how we live our lives, but artificial intelligence, or AI, may present the most exciting changes. While AI has been around for a while now, recent improvements have made the technology much more adaptable. Looking into the future, it’s easy to predict a world in which artificial intelligence plays a more significant role in our daily lives.

Getting around with AI:

Self-driving cars are already beginning to make their way onto the roads, and we can expect this technology to advance considerably in the coming years. The U.S. Department of Transportation has begun issuing guidance on AI-driven vehicles and has adopted the six SAE levels of driving automation, which range from Level 0 (no automation) to Level 5 (full automation). Most cars on the road today are still at the lower levels, which require a human driver to be at the wheel. Ultimately, the goal is to create an entirely automated self-driving car, which is expected to be much safer. Logistics companies and public transportation services are also looking at incorporating AI technology to create self-driving trucks, buses, taxis, and planes.

AI and Robotics will come together:

The field of cybernetics has already begun to overlap with artificial intelligence, and that trend is expected to continue. By incorporating AI technology into robotics, we may soon be able to enhance our own bodies, giving us greater strength, longevity, and endurance. While cybernetics may help us enhance healthy bodies, the technology is aimed primarily at helping people with disabilities. People with amputated limbs or permanent paralysis can be given a much higher quality of life. Cybernetic limbs that can communicate with the brain can become almost as useful as natural limbs. In the future, artificial limbs may even become stronger, faster, and more efficient than natural ones.

AI will help to create Humanoid Robots:

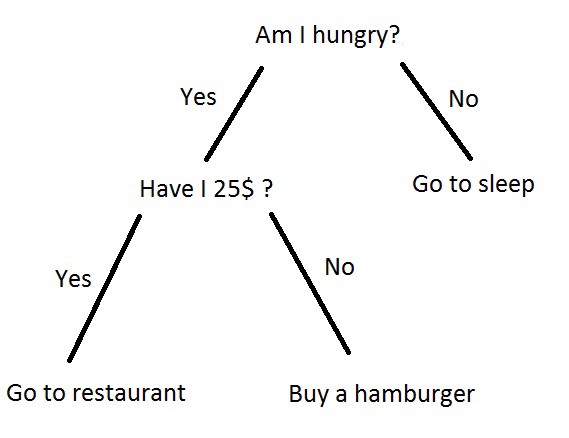

A lot of work, money, and research goes into making humanoid robots. The human body is studied first to get a clear picture of what is to be imitated. Then, the designers have to determine the task or purpose the humanoid is being created for. Some humanoid robots are created strictly for experimental or research purposes, and others for entertainment. Still others are created to carry out specific tasks, such as acting as an AI-powered personal assistant or supporting computer vision work.

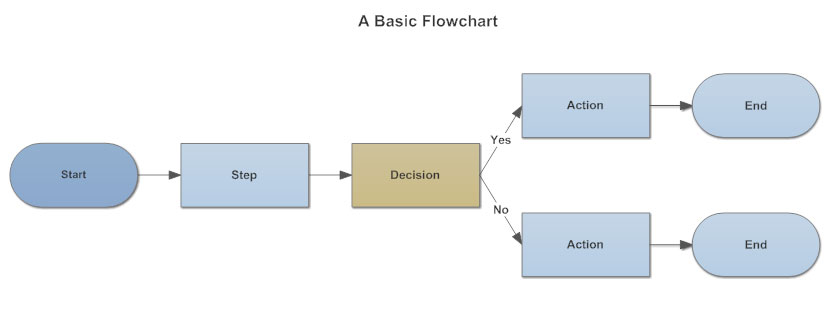

The next step scientists and inventors have to take before a fully functional humanoid is ready is to create mechanisms similar to human body parts and test them. Then, they have to go through the coding process, which is one of the most vital stages in creating a humanoid. Coding is the stage in which the inventors program the instructions that enable the humanoid to carry out its functions and answer questions.

Although humanoid robots are becoming very popular, inventors face a few challenges in creating fully functional and realistic ones. Some of these challenges include:

· Actuators: These are the motors that produce motion and gestures. The human body is dynamic. You can easily pick up a rock, toss it across the street, spin seven times, and do the waltz, all in the space of ten to fifteen seconds. To make a humanoid robot, you need strong, efficient actuators that can imitate these actions flexibly and within the same time frame or even less. The actuators should be efficient enough to carry out a wide range of actions.

· Sensors: These are what help humanoids sense their environment. To function properly, humanoids need the human senses: touch, smell, sight, hearing, and balance. The hearing sensor is important for the humanoid to hear instructions, interpret them, and carry them out. The touch sensor prevents it from bumping into things and damaging itself. The humanoid needs a sensor to balance its movement, and it equally needs heat and pain sensors to know when it faces harm or is being damaged. Facial sensors also need to be working for the humanoid to make facial expressions, and they should support a wide range of expressions.

Making sure that these sensors are available and efficient is a hard task.

Facial Recognition:

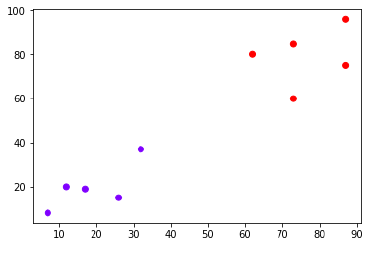

What is a Facial Recognition System? In simple words, a Facial Recognition System can be defined as a technology that can identify or verify a person from a digital image or video source by comparing and analyzing patterns based on the person’s facial contours.
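
To make the difference between verifying and identifying a person concrete, here is a minimal Python sketch. It assumes each face has already been reduced to a short list of numbers (a "template"); the names, the numbers, and the `THRESHOLD` value are all made up for illustration, and a real system would compute templates from images with a trained model.

```python
import math

# Hypothetical enrolled face templates (illustrative numbers only).
ENROLLED = {
    "alice": [0.10, 0.80, 0.30],
    "bob": [0.90, 0.20, 0.50],
}

# Assumed cut-off for "close enough"; real systems tune this on data.
THRESHOLD = 0.25


def distance(a, b):
    """Euclidean distance between two equal-length templates."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def verify(name, probe):
    """Verification (1:1): does the probe match the claimed identity?"""
    return name in ENROLLED and distance(ENROLLED[name], probe) <= THRESHOLD


def identify(probe):
    """Identification (1:N): closest enrolled name, or None if nobody is close."""
    best = min(ENROLLED, key=lambda n: distance(ENROLLED[n], probe))
    return best if distance(ENROLLED[best], probe) <= THRESHOLD else None


if __name__ == "__main__":
    probe = [0.12, 0.78, 0.33]  # a new capture that resembles "alice"
    print(verify("alice", probe))
    print(identify(probe))
```

Verification answers "is this the person they claim to be?", while identification answers "who is this?" by searching everyone enrolled.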

Since the mid-1900s, scientists have been working on using computers to recognize human faces. Face recognition has received substantial attention from researchers due to its wide range of applications in the real world.

WHY IS FACIAL RECOGNITION IMPORTANT?

Facial recognition is now considered to have advantages over other biometric systems, such as palm print and fingerprint recognition, because it doesn’t require any action from the person being identified and an image can be captured without that person’s knowledge. This can be highly useful in security applications such as airport screening, criminal detection, face tracking, and forensics.

Your Face will become your ID:

We’re already seeing biometrics incorporated into our daily lives, and that technology is expected to evolve. Eventually, many in the tech industry anticipate AI-driven applications that allow machines to recognize your face to complete transactions. Your credit cards and driver’s license may be linked to your face, allowing pattern recognition devices to know you instantly. This can make everyday transactions far more efficient, saving us from having to wait in line at the store, bank, or movie theater.

Receive better Medical care:

Research is already underway to develop new software applications that use AI to help doctors diagnose and treat patients. It won’t be long before wearable devices can measure blood sugar levels for diabetics and transmit that data to the patient’s doctor. Already, devices are in use that measure heart rate, respiration, and other vital functions. Artificial intelligence may also help patients better understand their care options and communicate more effectively with their caregivers.

AI Response will be more Empathetic:

Internet users have already seen the effect of artificial intelligence when they visit a website with a chatbot. In the past, chatbots were preprogrammed to give specific answers to specific inquiries. Today, artificial intelligence software allows chatbots and virtual personal assistants to look up answers to a much wider range of questions and respond in natural language. While that is impressive, there’s still room for advancement. Ultimately, AI-driven devices will analyze our speech or actions to interpret our needs, so they can offer more insightful information. This type of programming might best be described as “digital empathy,” and it may provide the best human-device interactions possible.
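
For a sense of what the older, preprogrammed style of chat bot looks like, here is a small Python sketch. The keywords, the answers, and the `reply` function are invented for illustration and do not come from any particular product.

```python
# Hypothetical canned answers, keyed by a keyword to look for in the question.
CANNED_ANSWERS = {
    "hours": "We are open 9am to 5pm, Monday to Friday.",
    "refund": "Refunds are processed within 5 business days.",
}

# What the bot says when no keyword matches.
FALLBACK = "Sorry, I don't understand. Please contact support."


def reply(message):
    """Return the first canned answer whose keyword appears in the message."""
    text = message.lower()
    for keyword, answer in CANNED_ANSWERS.items():
        if keyword in text:
            return answer
    return FALLBACK


if __name__ == "__main__":
    print(reply("What are your hours?"))
    print(reply("Tell me a joke"))
```

A bot like this can only answer what it was given in advance, so anything outside its keyword list falls through to the fallback message.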

How AI Technology will change our lives on a Personal level:

Artificial intelligence has already made its way into many homes, but it will soon be indispensable in most households. As we move closer toward becoming a technologically driven society, AI applications will fulfill the promise that computers would make our lives easier. AI technology will help us live happier and healthier lives, while also helping us conserve time, energy, and money.
